Technical SEO

Elevate your technical SEO game with clear, actionable ideas to improve your website's search presence.

-

Let's get technical

What is technical SEO? Technical SEO encompasses the nuts and bolts of your website: the underlying code, proper organization, structuring and tagging of content, appropriate metadata, and site speed. Basically, technical SEO is everything but the website content and backlinks.

Optimizing your website's technical SEO provides a solid foundation for your content to shine, and, most importantly, lets your website be easily understood by web crawlers—the robots that search engines use to figure out what your website is about.

-

Metadata

Metadata is extra information that describes your website or web page and helps web crawlers quickly understand your content. Properly annotating your website with metadata lets you control to a large extent how your pages are displayed in Google's search results, and how they appear when shared on platforms like Facebook and Twitter.

-

Title tag

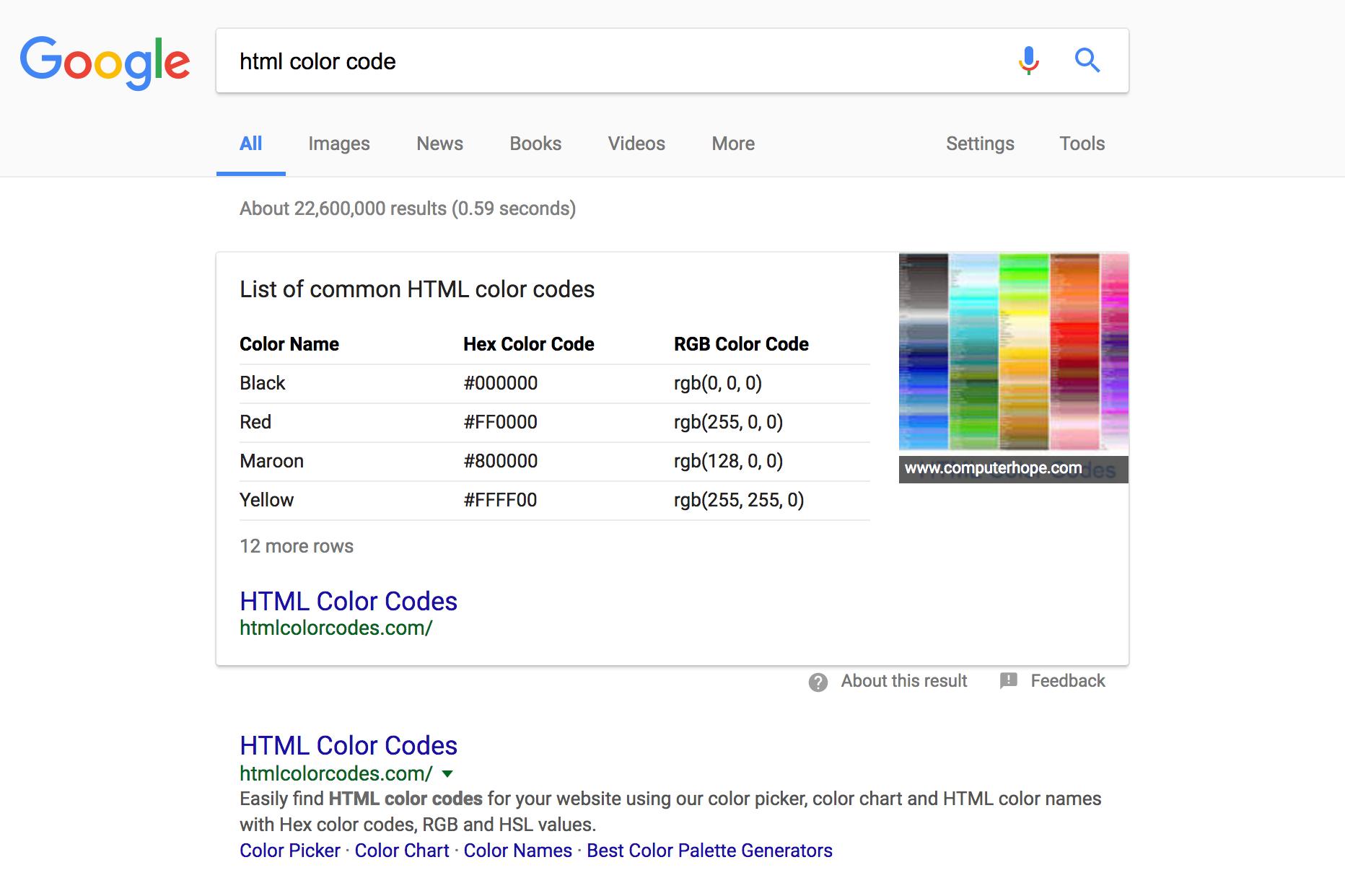

The title tag is arguably the most important meta tag, and it's what Google uses to display your page's title in the search results (the blue text in the image above).

The HTML code is very simple; if you're implementing it yourself, make sure to place it inside the <head> of your document.

```html
<title>Your page title</title>
```

The recommended length for a title tag is between 50 and 60 characters. You can use a word counter to help determine the optimal text for your title tag.
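To check a page against that range, a small script can pull the `<title>` text out of the HTML and count its characters. This is a minimal sketch using Python's standard-library `HTMLParser`; `TitleParser` and `check_title` are hypothetical names, and the 50 to 60 range is simply the recommendation above passed in as defaults.

```python
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collects the text of the first <title> element in a document."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = None

    def handle_starttag(self, tag, attrs):
        if tag == "title" and self.title is None:
            self._in_title = True
            self.title = ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def check_title(html, min_len=50, max_len=60):
    """Return (title, within_range) for the page's <title> text."""
    parser = TitleParser()
    parser.feed(html)
    parser.close()
    title = (parser.title or "").strip()
    return title, min_len <= len(title) <= max_len


print(check_title("<head><title>Home</title></head>"))  # ('Home', False)
```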

-

Description tag

The description tag is an opportunity to summarize, albeit briefly, what your web page is about. Google may display it below your page's title and URL in the search results, depending on what the search term is and how relevant it believes the description to be compared with other text on your page.

Again, the HTML is pretty straightforward, and the description tag also belongs inside the <head> of your document. Change the text inside the "content" attribute to your desired description.

```html
<meta name="description" content="Your page description">
```

A good rule of thumb is to try to keep your description text under ~300 characters. That's about the longest length Google will display in the search results, and anything longer will most likely be truncated 🔪.
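Character counts are only a rough guide to where a snippet gets cut off, but a quick preview can still flag descriptions that run long. The sketch below assumes the ~300-character figure above; `preview_description` is a hypothetical helper that cuts at the last complete word and appends an ellipsis, roughly the way a truncated snippet looks.

```python
def preview_description(text, limit=300):
    """Roughly preview how a description longer than `limit` might be cut off."""
    text = " ".join(text.split())  # collapse newlines and repeated spaces
    if len(text) <= limit:
        return text
    # Cut at the last complete word before the limit, then add an ellipsis.
    cut = text[:limit].rsplit(" ", 1)[0]
    return cut.rstrip(",;:.") + "…"


print(preview_description("Learn how to structure pages, metadata and URLs.", limit=30))
# Learn how to structure pages…
```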

-

Open Graph (Facebook) tags

Open Graph is a protocol developed by Facebook to "enable any web page to become a rich object in a social graph." For SEO purposes we're focusing on one of the most common use cases: properly formatting your web page for sharing on Facebook.

Tip

Optimizing social cards can increase engagement, which Google uses as a positive ranking signal.

The image above depicts how this website would look when shared on Facebook with properly tagged metadata. Adding the Open Graph tags below to the <head> of your website will also help you control how your content is displayed when someone shares it on Facebook.

```html
<meta property="og:site_name" content="Your Website Name">
<meta property="og:type" content="website">
<meta property="og:url" content="https://example.com/your-page-url">
<meta property="og:title" content="Your Page Title">
<meta property="og:description" content="Your page description">
<meta property="og:image" content="https://example.com/your-page-image.jpg">
```

Be sure to replace the "content" attribute of each tag with your own information, and use a full, absolute URL for og:image. You can test if your tags are implemented correctly using Facebook's debugging tool. Note that sometimes your image won't show up the first time Facebook scrapes the page; try clicking "scrape again" before pulling all your hair out. 😉
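If your pages are generated by a script or template, it's safer to build these tags programmatically so that quotes or ampersands in a title can't break the markup. A minimal Python sketch, assuming you already have the page values at hand; `open_graph_tags` is a hypothetical helper, and the standard library's `html.escape` does the attribute escaping.

```python
from html import escape


def open_graph_tags(site_name, url, title, description, image_url, og_type="website"):
    """Render Open Graph <meta> tags with every value HTML-escaped."""
    properties = {
        "og:site_name": site_name,
        "og:type": og_type,
        "og:url": url,
        "og:title": title,
        "og:description": description,
        "og:image": image_url,  # pass an absolute URL
    }
    return "\n".join(
        f'<meta property="{name}" content="{escape(value, quote=True)}">'
        for name, value in properties.items()
    )


print(open_graph_tags(
    "Your Website Name",
    "https://example.com/your-page-url",
    'Dogs & Cats: The "Complete" Guide',  # & and quotes get escaped
    "Your page description",
    "https://example.com/your-page-image.jpg",
))
```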

-

Twitter tags

Similar to Facebook's Open Graph tags, Twitter has its own meta tags you can use to control the appearance of your website when shared on its platform.

Tip

Twitter's cards let you specify different media types including text, image, video, and app.

Our favorite is the "summary card with large image" type (image above, code below), but depending on your page media you may prefer a different style. You can learn more about Twitter's cards on their developer website.

```html
<meta name="twitter:domain" content="example.com">
<meta name="twitter:card" content="summary_large_image">
<meta name="twitter:url" content="https://example.com/your-page-url">
<meta name="twitter:title" content="Your Page Title">
<meta name="twitter:description" content="Your page description">
<meta name="twitter:image" content="https://example.com/your-page-image.jpg">
```

These tags use the name attribute, which is the form Twitter's documentation shows, and an absolute image URL. After adding the tags to your website, you can check whether they're implemented correctly using Twitter's card validator.
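Before reaching for the official validators, a quick local check can catch tags that are simply missing. This sketch collects every `<meta>` tag's `property` or `name` with Python's `HTMLParser` and reports which entries of a hypothetical `REQUIRED` checklist are absent or empty. It accepts either attribute, since Open Graph uses `property` while Twitter's documentation uses `name`. It does not validate the values, so it is no substitute for Facebook's and Twitter's tools.

```python
from html.parser import HTMLParser


class MetaCollector(HTMLParser):
    """Maps each <meta> tag's property (or name) to its content."""

    def __init__(self):
        super().__init__()
        self.meta = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = dict(attrs)
        key = attrs.get("property") or attrs.get("name")
        if key:
            self.meta[key] = attrs.get("content")


# Hypothetical checklist; adjust it to the tags you actually use.
REQUIRED = ("og:title", "og:description", "og:image",
            "twitter:card", "twitter:title", "twitter:image")


def missing_social_tags(html, required=REQUIRED):
    """Return the required tags that are absent or have empty content."""
    parser = MetaCollector()
    parser.feed(html)
    parser.close()
    return [key for key in required if not parser.meta.get(key)]
```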

-

Viewport tag

Web browsers use the viewport tag to properly scale a website across different screen sizes. Google recognizes and rewards responsive sites, so making sure your site is zoomed correctly on mobile and tablet devices can have a positive effect.

```html
<meta name="viewport" content="width=device-width, initial-scale=1">
```

-

Canonical link

Canonical links tell search engines which version of a particular website or web page URL is the definitive version. Here is an example:

Let's say your website URL is https://example.com. Now, another website links back to your site using https://www.example.com (note the "www"). From a technical perspective, these are two different URLs, and unless you've declared a canonical URL in your web page, Google may not be able to distinguish which one is the most authoritative.

In some cases this results in a website being penalized because the search engine thinks there are multiple sites with duplicate content. To avoid this, make sure you define a canonical URL in the <head> of every page of your website, like below:

```html
<link rel="canonical" href="https://example.com/your-page-url">
```
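Choosing the canonical version of each URL is a policy decision. As a sketch, assuming you've settled on https, the non-www host from the example above, no trailing slash, and no query string (all assumptions, not rules), a normalizer can map every variant onto one URL and emit the tag. `canonical_url`, `canonical_link_tag` and `PREFERRED_HOST` are hypothetical names; dropping the query string would be wrong for pages whose content depends on it.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit

PREFERRED_HOST = "example.com"  # assumption: the non-www host is canonical


def canonical_url(url, host=PREFERRED_HOST):
    """Collapse common URL variants onto one canonical form (example policy)."""
    parts = urlsplit(url)
    netloc = parts.hostname or host  # .hostname is already lowercased
    if netloc == "www." + host:
        netloc = host
    path = parts.path.rstrip("/") or "/"
    # Force https and drop the query string and fragment.
    return urlunsplit(("https", netloc, path, "", ""))


def canonical_link_tag(url):
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'


print(canonical_link_tag("http://www.example.com/your-page-url/?utm_source=news#top"))
# <link rel="canonical" href="https://example.com/your-page-url">
```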

-

-

Markup

Markup (in most cases HTML) constitutes the bulk of your web pages, and structuring it with the appropriate tags can help search engines identify the most important elements on your page. This is essential if you're trying to rank for particular keywords or topics that you want to surface on your pages above all else.

-

Header tags (<h1>, <h2>, ...)

Text headings are crucial in highlighting your most important keywords, and the king of them all is the <h1> tag. Every page should have one, and only one, <h1> tag. This is your page title, and should include your most important keyword(s).

```html
<h1>Your Page Heading with Target Keyword</h1>
...
<h2>Your 1st Page Section Heading</h2>
...
<h2>Your 2nd Page Section Heading</h2>
...
<h3>Your 2nd Page Section 1st Subheading</h3>
...
<h3>Your 2nd Page Section 2nd Subheading</h3>
...
```

Subheadings (<h2> tags) are often used to break a page into sections; while also important, they are not as heavily weighted as the <h1> in terms of SEO. These sections can be further segmented using <h3> through <h6> tags, which generally carry less weight and are primarily used for page structure.
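A quick way to audit a page's headings is to list them in document order. The sketch below, built on Python's `HTMLParser`, checks the one-`<h1>` rule above; it also flags jumps such as an `<h2>` followed directly by an `<h4>`, which is a common structural convention rather than something this guide requires. `audit_headings` and `HeadingCollector` are hypothetical names.

```python
from html.parser import HTMLParser

HEADING_LEVELS = {f"h{n}": n for n in range(1, 7)}


class HeadingCollector(HTMLParser):
    """Records the level of every <h1>..<h6> tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_LEVELS:
            self.levels.append(HEADING_LEVELS[tag])


def audit_headings(html):
    """Return a list of problems found in the page's heading outline."""
    parser = HeadingCollector()
    parser.feed(html)
    parser.close()
    levels = parser.levels
    problems = []
    h1_count = levels.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one <h1>, found {h1_count}")
    # Flag jumps of more than one level, e.g. <h2> followed directly by <h4>.
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            problems.append(f"<h{current}> follows <h{previous}> (skips a level)")
    return problems
```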

-

Lists and tables

Lists and tables are vastly underestimated HTML elements, and when used properly they can really help search engines index and understand your content. Case in point: Google will sometimes use tabular or list-based data in its Featured Snippets, like below:

If you have content that might perform better in table or list format, it's worth a shot, as it could give your SEO a boost. You can check out the original table of color codes for yourself on HTML Color Codes, towards the bottom of the page.

-

Semantic HTML tags

Semantic HTML tags are useful for both humans and bots, as they convey extra meaning about their contents. Commonly used semantic tags include <nav>, <main>, <article>, <header>, <section> and <footer>.

```html
<body>
  <header>
    <nav></nav>
  </header>
  <main>
    <article>
      <header></header>
      <section></section>
      <footer></footer>
    </article>
  </main>
  <footer>
  </footer>
</body>
```

Above is a basic page structure that you might find useful as a prototype for building out your own web pages. Note how both the outer <body> and the inner <article> each have their own <header> and <footer>. As far as HTML goes, this is totally valid, and encouraged, as it yields semantic cues to crawlers about the contents of your page.

-

Page breadcrumbs

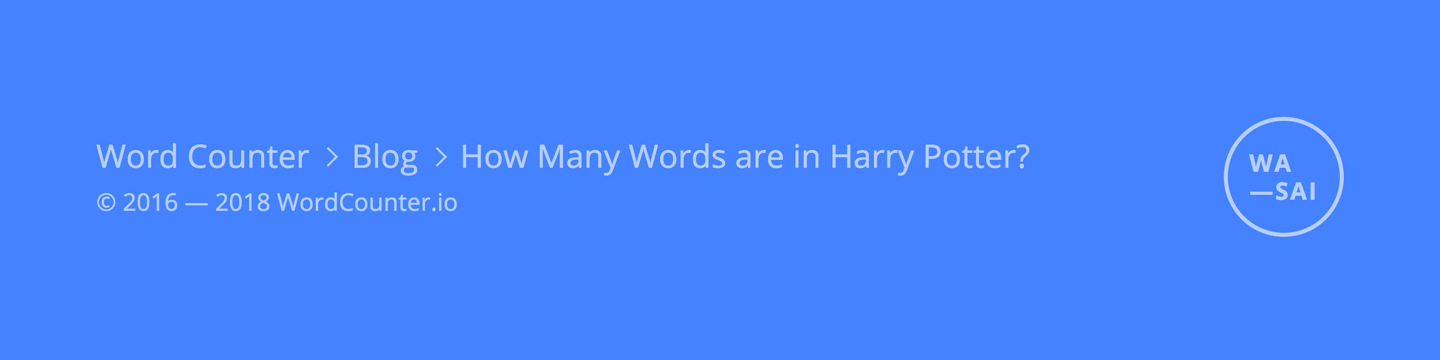

Breadcrumbs are used to indicate where a visitor currently is within the larger context of a website. They're like a trail you leave behind as you visit pages within a website so you can get back to where you were previously. Breadcrumbs often follow a website's URL structure, but not always.

Tip

Breadcrumbs help your visitors and search engines understand your site organization.

In the example above, a visitor is on the page How Many Words are in Harry Potter, which is identified in the breadcrumbs as being a child of the blog, which in turn is a child of the website itself.

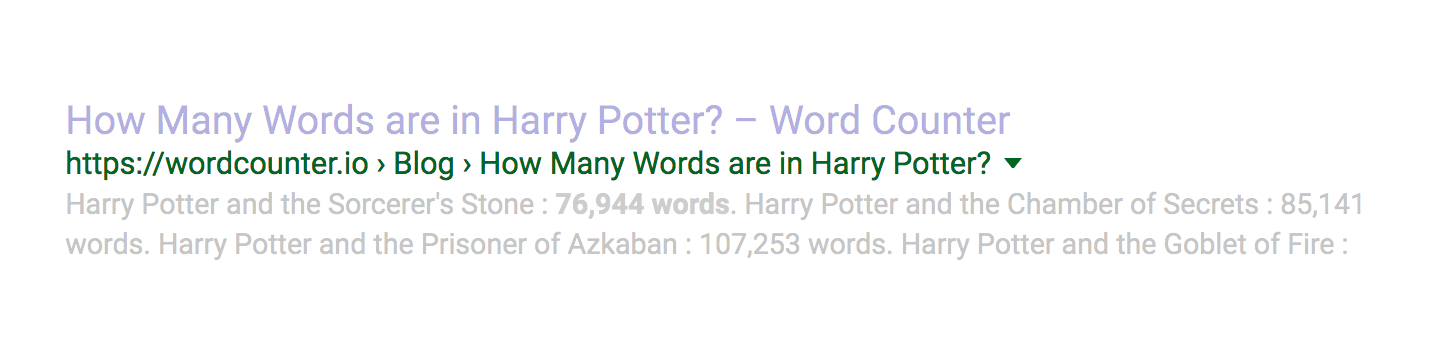

Breadcrumbs can also help search engines understand your website, and if tagged appropriately they may even be featured in search results, as in the image above.

If you want to learn more about integrating breadcrumbs into your website, Google (surprise) has some useful examples in their developer resources on breadcrumbs.
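Google's breadcrumb guidance describes tagging them as structured data with the schema.org `BreadcrumbList` type, where each `ListItem` has a `position`, a `name` and an `item` URL. Here is a minimal Python sketch that renders that JSON-LD from (name, URL) pairs; `breadcrumb_json_ld` is hypothetical and the URLs are placeholders, so compare the output against Google's breadcrumb documentation before relying on it.

```python
import json


def breadcrumb_json_ld(crumbs):
    """Render a schema.org BreadcrumbList as a JSON-LD <script> tag.

    `crumbs` is a list of (name, url) pairs, from the home page down.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(crumbs, start=1)
        ],
    }
    # Escape "</" so a name can never close the <script> element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(breadcrumb_json_ld([
    ("Home", "https://example.com/"),
    ("Blog", "https://example.com/blog"),
    ("How Many Words are in Harry Potter", "https://example.com/blog/harry-potter-word-count"),
]))
```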

-

-

Site Architecture

Structuring your site effectively can make it much easier for web crawlers (and humans) to understand what your web pages are about, and how each fits into the website as a whole.

As you probably guessed, site architecture is easiest to get right at the beginning of a project; however, websites are always evolving, so it's never too late to step back and formulate a better strategy.

-

Organize content

Most websites have a purpose, and that purpose typically revolves around one or more topics or themes. When structuring a website, a popular and proven SEO strategy is to "silo" content around these themes, especially if there are multiple.

What this means is when you're mapping out your site's structure, try to group similar pages, content, or resources under unified headings, as this will help search engines—and of course your audience—more easily index and navigate the site.

Tip

Try to keep your content no more than 2–3 directories below your main page, and never go deeper than 3.

```text
<!-- Dogs -->
pets.com/dogs
  ↳ pets.com/dogs/beagle
  ↳ pets.com/dogs/bulldog
  ↳ pets.com/dogs/airedale
  ...

<!-- Cats -->
pets.com/cats
  ↳ pets.com/cats/persian
  ↳ pets.com/cats/burmese
  ↳ pets.com/cats/siamese
  ...
```

For example, say you're building a website about pets. Since there are many kinds of pets, you could break your website up into sub-groups (or "silos") like those shown above. It's a bit like building topic-focused micro sites within your main website.

By logically structuring your website using silos (in this case dogs and cats) and creating internal links only between pages within a silo, you create a feedback loop that boosts the relevancy of both the topic and its sub-topics, which in turn can help you rank higher.
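Both rules of thumb in this section, a maximum depth of three directories and internal links that stay inside a silo, can be checked mechanically. This sketch takes a hypothetical list of (source, target) internal links as absolute URLs and treats the first path segment as the silo. It lets links to or from the home page through, which is an assumption, since the home page has to link into every silo. `audit_site`, `silo` and `path_segments` are hypothetical names.

```python
from urllib.parse import urlsplit


def path_segments(url):
    """'https://pets.com/dogs/beagle' -> ['dogs', 'beagle'] (needs an absolute URL)."""
    return [segment for segment in urlsplit(url).path.split("/") if segment]


def silo(url):
    """Treat the first path segment as the silo; the home page has none."""
    segments = path_segments(url)
    return segments[0] if segments else None


def audit_site(links, max_depth=3):
    """Flag pages nested too deeply and internal links that cross silos."""
    problems = []
    pages = sorted({url for link in links for url in link})
    for url in pages:
        if len(path_segments(url)) > max_depth:
            problems.append(f"too deep: {url}")
    for source, target in links:
        a, b = silo(source), silo(target)
        # Links to or from the home page (silo None) are allowed.
        if a is not None and b is not None and a != b:
            problems.append(f"cross-silo link: {source} -> {target}")
    return problems
```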

Siloing can seem a bit complicated, and we're just scratching the surface. If you want to dig deeper, read Bruce Clay's original article on SEO silos and Authority Hacker's more recent post on site architecture.

-

Structure URLs

URL structure will vary depending on your overall site organization, but there are some best practices you can implement regardless of whether you're building a brand new site or updating an existing one.

```text
<!-- Well Structured URLs -->
example.com/meaningful-page-title-with-keyword

<!-- Poorly Structured URLs -->
example.com/6758476
example.com/post?id=9441&string=keyword
example.com/dir/Ix87fgtU#use_modal&s="word"
```

Generally, you'll want to keep your URLs short without sacrificing readability. Make them semantic, and use dashes between words (not underscores). Many search engines take URL wording into account as part of their ranking algorithms, so try to avoid using IDs or other anonymized strings, as these carry no keyword relevancy.
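Generating slugs from page titles keeps URLs consistent. Below is a minimal Python sketch of a hypothetical `slugify` helper: it lowercases the title, strips accents, and collapses anything that isn't a letter or digit (spaces, underscores, punctuation) into a single dash. Titles written entirely in non-Latin scripts would come out empty with this approach, so treat it as a starting point.

```python
import re
import unicodedata


def slugify(title):
    """Turn a page title into a short, dash-separated URL slug."""
    # Decompose accented characters and drop the non-ASCII marks (é -> e).
    ascii_text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    # Replace every run of non-alphanumerics with a single dash.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower())
    return slug.strip("-")


print(slugify("Meaningful Page Title with Keyword"))  # meaningful-page-title-with-keyword
```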

-

Build a sitemap

A sitemap is an XML file that lists and prioritizes all the pages on your website you want a search engine to index. It's like a shortcut for web crawlers, and can speed up the indexing process.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com</loc>
    <lastmod>2018-03-08</lastmod>
    <changefreq>daily</changefreq>
    <priority>1.0</priority>
  </url>
  <url>
    <loc>https://example.com/page</loc>
    <lastmod>2018-03-08</lastmod>
    <changefreq>weekly</changefreq>
    <priority>0.75</priority>
  </url>
  <url>
    <loc>https://example.com/page/sub-page</loc>
    <lastmod>2018-03-08</lastmod>
    <changefreq>monthly</changefreq>
    <priority>0.5</priority>
  </url>
  ...
</urlset>
```

Sitemaps are simple, with each block containing a URL, the last time the page was updated in W3C Datetime (ISO 8601) format, how frequently that page changes (hourly, daily, weekly and others), and the page's priority within your website (from 0.0 to 1.0).

Typically accessed from the root domain, a sitemap's URL will look something like this: example.com/sitemap.xml.
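Most sites generate the sitemap rather than writing it by hand. Here is a sketch using Python's `xml.etree.ElementTree`; `build_sitemap` and the `pages` list are hypothetical. It writes each `<url>`'s children in the order the sitemaps.org schema lists them (loc, lastmod, changefreq, priority), and ElementTree escapes characters such as `&` in URLs automatically.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from dicts with 'loc' plus optional lastmod/changefreq/priority."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        # Children are written in the order the sitemap schema lists them.
        for field in ("loc", "lastmod", "changefreq", "priority"):
            if field in page:
                ET.SubElement(url, field).text = str(page[field])
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")


pages = [
    {"loc": "https://example.com", "lastmod": "2018-03-08", "changefreq": "daily", "priority": 1.0},
    {"loc": "https://example.com/page", "lastmod": "2018-03-08", "changefreq": "weekly", "priority": 0.75},
]
print(build_sitemap(pages))
```

Save the output as sitemap.xml at the root of your domain.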

-

Have a robots.txt file

Search engines use web crawlers or bots to visit and catalog your site, and a robots.txt file lets them know what pages they can or cannot crawl, and how frequently they should access your content.

```text
User-agent: *
Disallow:

Sitemap: https://example.com/sitemap.xml
```

For most sites the above code will suffice: it lets any web crawler visit all pages on a website (an empty Disallow: line blocks nothing) and provides an explicit link to a sitemap where those pages are listed. If you'd like to learn more, Moz has a great robots.txt article digging deeper into all the different customizations available and what they mean.
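You can sanity-check a robots.txt file with Python's standard `urllib.robotparser` module, which reports whether a given user agent may fetch a URL and, in Python 3.8 and later, which sitemaps the file declares. The `check_robots` wrapper is hypothetical. Note that this module implements basic prefix matching, not every extension a particular search engine supports.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow:

Sitemap: https://example.com/sitemap.xml
"""


def check_robots(robots_txt, url, user_agent="Googlebot"):
    """Return (allowed, sitemaps) for `url` under the given robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url), parser.site_maps()


print(check_robots(ROBOTS_TXT, "https://example.com/any-page"))
# (True, ['https://example.com/sitemap.xml'])
```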

-

Use an SSL certificate

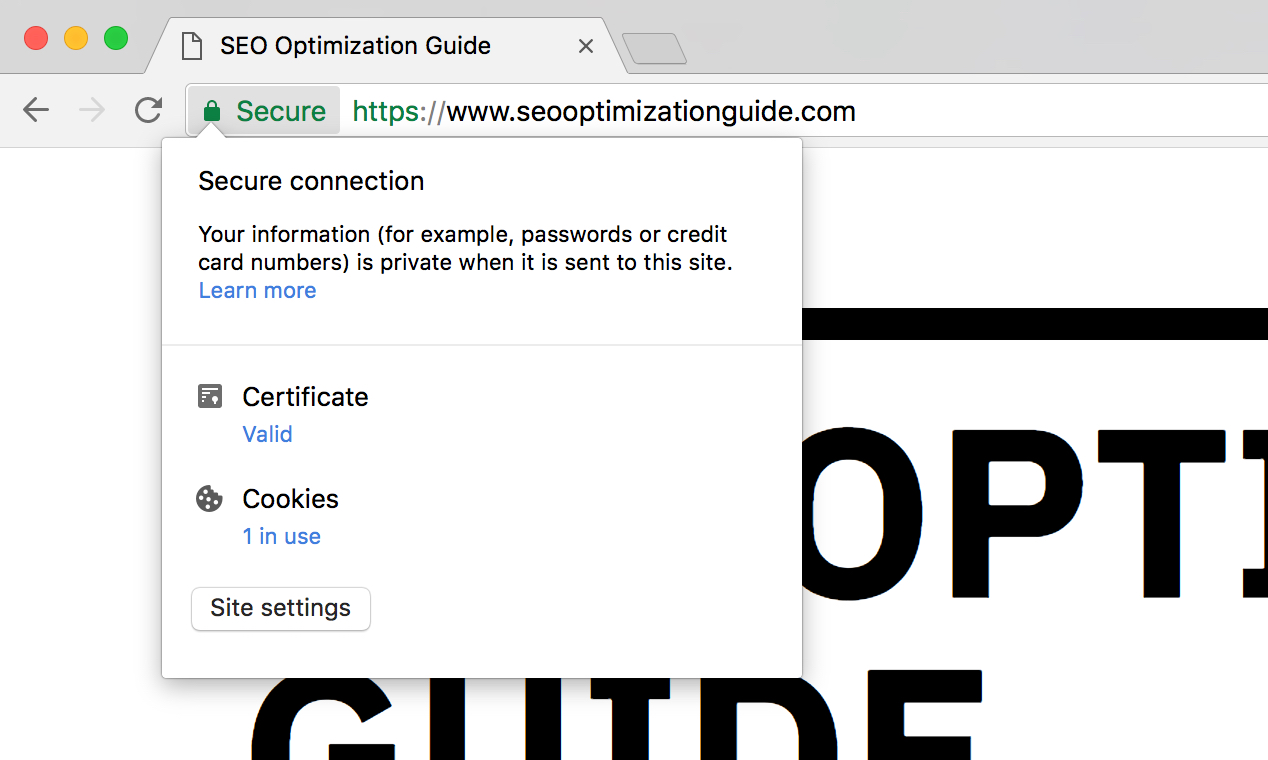

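Secure Sockets Layer (SSL) certificates secure data transfer over a website's network connection. They're what give websites the "https" in front of their URLs, and Google uses them as a positive ranking signal. (Modern HTTPS connections actually use TLS, the successor to SSL, but the certificates are still commonly called SSL certificates.)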

Even if your website doesn't involve credit card transactions or store sensitive data, it's still a good idea to have an SSL certificate.

If you look at the address bar in your browser, you can see our SSL certificate in action. Chrome, Firefox and Safari users will see a green or gray lock next to the URL indicating the page is secure, while Internet Explorer and Edge display the entire address bar in green.

-

-

Site Speed

Search engines use site speed (the time it takes your website to load) as a ranking signal, and all else being equal, faster sites tend to rank better. Here are some steps you can take to help boost your website's performance.

-

Bundle and minify assets

Page load time can be significantly sped up by reducing network requests. Every individual file your web page loads requires a separate request, and these can quickly add up.

A surefire way to reduce load time is to concatenate your JavaScript and CSS assets into as few files as possible. Minifying these concatenated files (stripping out whitespace, comments, and other characters the browser doesn't need) can further reduce load time.
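Build tools normally handle this step, but the idea is simple enough to sketch. The Python below is deliberately naive: the hypothetical `bundle_css` concatenates stylesheets into one file, and the hypothetical `naive_minify_css` strips comments and collapses whitespace. Its regexes would mangle CSS that contains these characters inside strings or `url(...)`, so use a dedicated minifier in production.

```python
import re


def bundle_css(sources):
    """Concatenate several stylesheets into one file (one request instead of many)."""
    return "\n".join(sources)


def naive_minify_css(css):
    """Very naive minifier: strips comments and unneeded whitespace.

    Not safe for CSS containing these characters inside strings or url(...).
    """
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.S)  # remove /* comments */
    css = re.sub(r"\s+", " ", css)                    # collapse runs of whitespace
    css = re.sub(r"\s*([{};,])\s*", r"\1", css)       # trim spaces around punctuation
    return css.strip()


bundle = bundle_css(["a {\n  color: red;\n}\n/* note */", "b { margin: 0; }"])
print(naive_minify_css(bundle))  # a{color: red;}b{margin: 0;}
```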

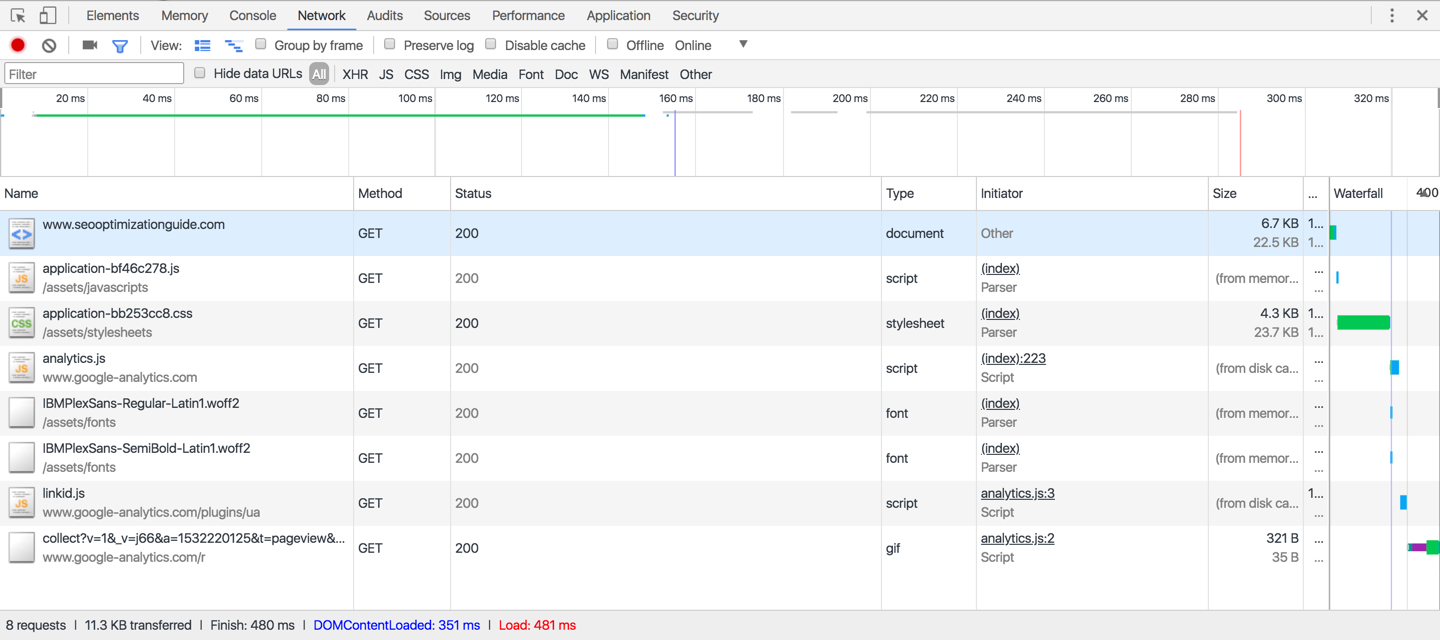

Using Chrome's developer tools, we can see that this site's homepage takes 480ms to load and makes only 8 requests: 1 for the HTML document, 1 for the JavaScript file, 1 for the CSS file, 2 for font files and 3 for Google Analytics resources. 🔥

-

Enable GZIP compression

You can squeeze some extra bytes out of your files by enabling GZIP compression, which works on both your assets (.js/.css) and your HTML pages. It's essentially zipping your HTML and assets like you would a normal desktop file: repeated strings, such as the many recurring tags and attributes in HTML, are replaced with short references, and the browser unzips the file when it arrives.
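In practice GZIP is switched on in your web server or CDN configuration, which then sends responses with a `Content-Encoding: gzip` header. To see why it pays off on markup, this short Python sketch compresses some deliberately repetitive, made-up HTML with the standard `gzip` module and confirms the round trip is lossless. `gzip_sizes` is a hypothetical helper.

```python
import gzip


def gzip_sizes(data: bytes):
    """Return (original size, gzip-compressed size) in bytes."""
    compressed = gzip.compress(data)
    # GZIP is lossless: decompressing restores every byte, tags included.
    assert gzip.decompress(compressed) == data
    return len(data), len(compressed)


# Made-up, highly repetitive markup, the kind of input where GZIP shines.
page = ('<li class="item"><a href="/dogs">Dogs</a></li>\n' * 200).encode("utf-8")
original, compressed = gzip_sizes(page)
print(f"{original} bytes -> {compressed} bytes")
```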

If you're interested in learning more, Better Explained has an extensive rundown on the background, advantages and implementations of GZIP compression.

-

Compress and resize images

Images are bandwidth hogs and can often make up the majority of a page's total size. Compressing images and making sure you're serving the appropriate size for each user's device is key to a performant website.

If your site is image-heavy, a great way to handle compression is to use a real-time image processing service like imgix to resize and compress images on the fly.
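Serving the appropriate size usually means offering several widths through the `<img>` element's `srcset` attribute and letting the browser pick one. This sketch assumes a hypothetical resizing service at images.example.com that takes the target width as a `w` query parameter (imgix, for example, uses `w`); `build_srcset` is also hypothetical, and it assumes the base URL has no query string of its own.

```python
from urllib.parse import urlencode


def build_srcset(image_url, widths):
    """One srcset candidate per width, each asking the service to resize."""
    return ", ".join(f"{image_url}?{urlencode({'w': w})} {w}w" for w in widths)


src = "https://images.example.com/cat.jpg"  # hypothetical image service URL
print(f'<img src="{src}?w=640" srcset="{build_srcset(src, [320, 640, 1280])}" '
      f'sizes="(max-width: 640px) 100vw, 640px" alt="A cat">')
```

The `sizes` attribute tells the browser how wide the image will be displayed, so it can download the smallest candidate that still looks sharp.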

-

Use a CDN

A CDN, or Content Delivery Network, will boost your page speed by caching your static files (assets and media) in multiple locations around the world.

By combining an image processing service like imgix (which also hosts and caches your images) with a CDN like Amazon CloudFront for your static assets, you can make sure your website is blazing fast just about anywhere in the world.

-

Set Expires Headers

Expires headers are used by web browsers to determine whether a resource (like an image) needs to be fetched from the site's origin server or if a locally cached version can be rendered instead.

By setting expires headers far in the future (say, a year from now) on resources that change infrequently you can significantly speed up the load time of your website for returning visitors.
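If you control the server, these are just response headers. This sketch computes an Expires date one year out in the GMT format HTTP requires, alongside the equivalent `Cache-Control: max-age`, which browsers honor over Expires when both are present. `far_future_cache_headers` is hypothetical; how you attach the headers depends on your server or host.

```python
from datetime import datetime, timedelta, timezone
from email.utils import format_datetime


def far_future_cache_headers(now=None, days=365):
    """Headers telling browsers they may reuse a cached copy for `days` days.

    `now` must be a timezone-aware UTC datetime (defaults to the current time).
    """
    now = now or datetime.now(timezone.utc)
    expires = now + timedelta(days=days)
    return {
        # HTTP dates are always expressed in GMT.
        "Expires": format_datetime(expires, usegmt=True),
        # Cache-Control max-age takes precedence over Expires when both are sent.
        "Cache-Control": f"public, max-age={days * 24 * 60 * 60}",
    }


print(far_future_cache_headers(datetime(2018, 3, 8, tzinfo=timezone.utc)))
# {'Expires': 'Fri, 08 Mar 2019 00:00:00 GMT', 'Cache-Control': 'public, max-age=31536000'}
```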

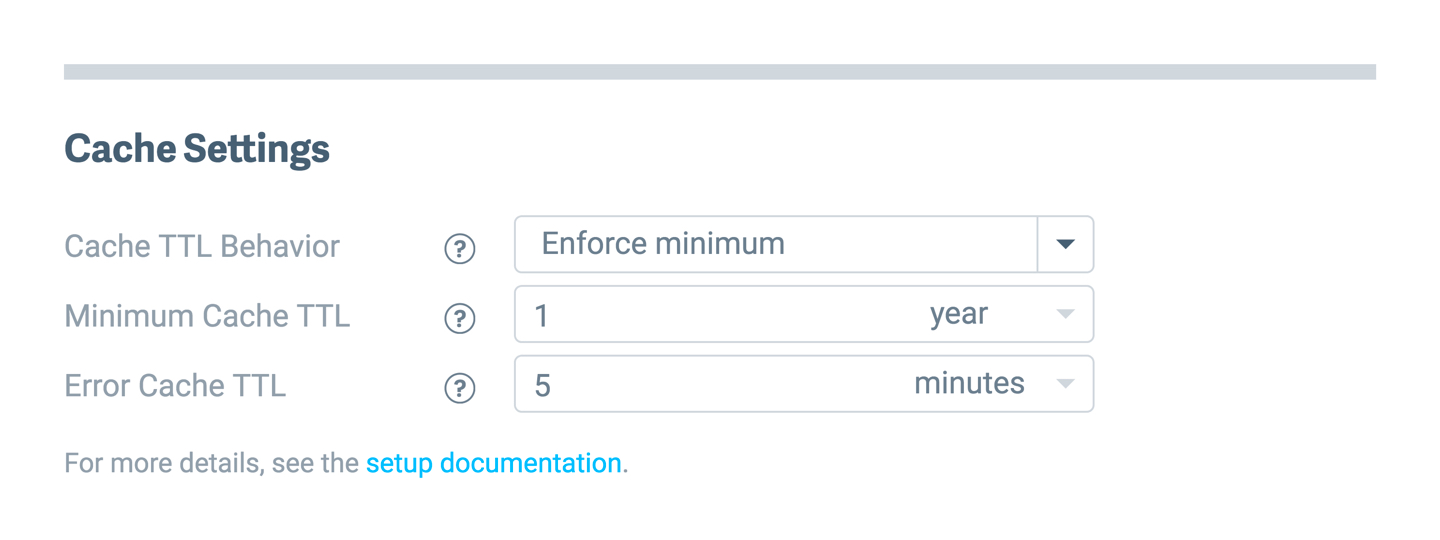

Figuring out how to set expires headers can be a bit complex. Fortunately, most cloud image hosts provide built-in mechanisms to set them easily from your dashboard. Imgix (pictured above) lets you input a minimum cache period from its management console.

-

Test your page speed

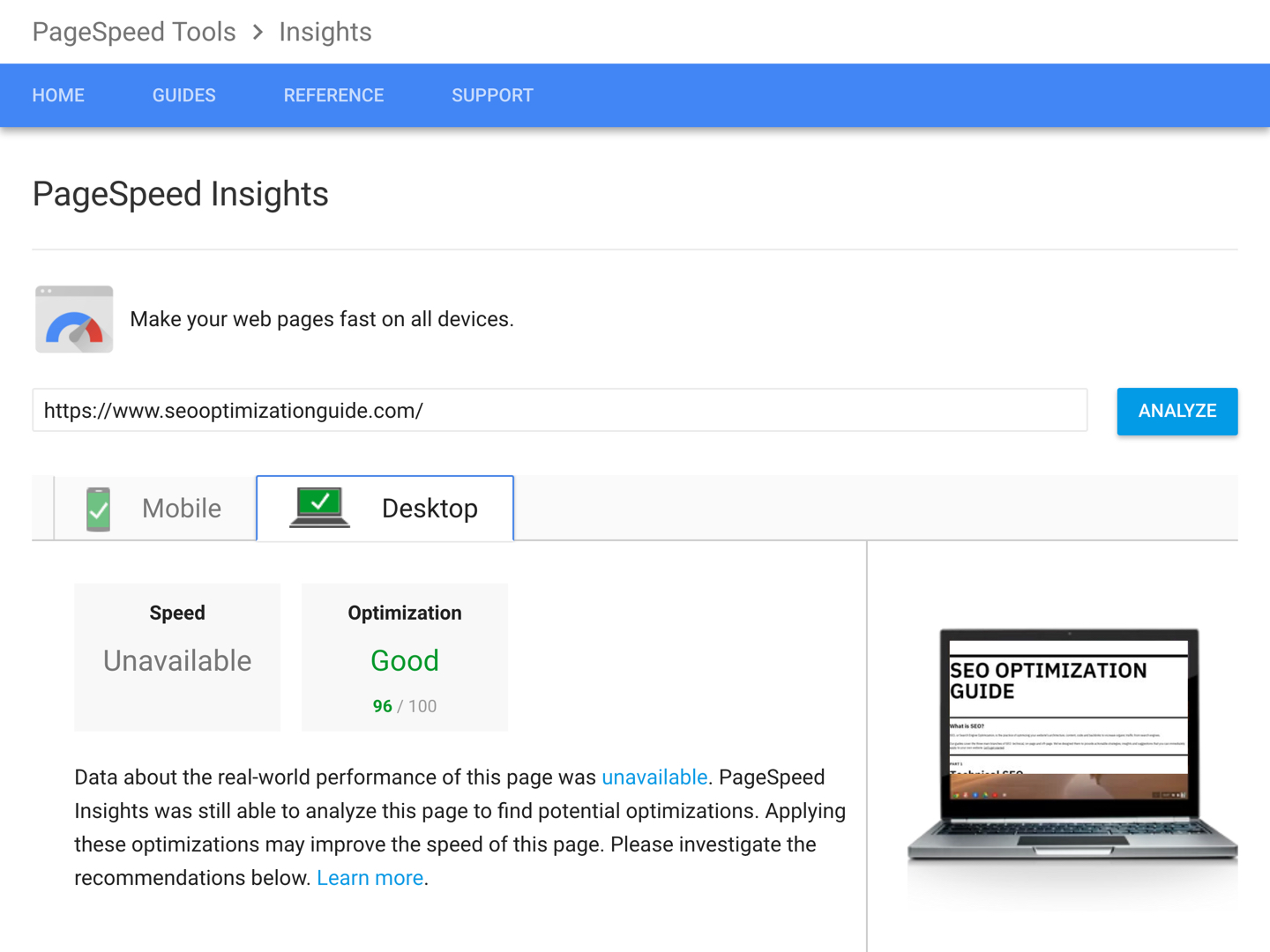

Once you've optimized your site's HTML, images, and assets, test it using Google's PageSpeed Insights. Generally, anything with a score above 80 is pretty well optimized.

Note that it's often impossible to get a perfect 100 on the test if you're using any of Google's other products, like Analytics, or other third-party scripts, since the test docks you for not leveraging browser caching on resources whose caching is out of your control. So don't sweat it. 😓

-